COVID-19 has overhauled much of the process and business of scientific research around the globe. In the rush to get results out to the scientific community for action and implementation, the research pipeline has turned into a veritable firehose.

The novel coronavirus is generally presumed to have its origins within Wuhan, China, where, on Dec. 31, 2019, a cluster of cases was reported. On Feb. 11, 2020, the World Health Organization (WHO) tweeted out COVID-19 as the official name of the disease caused by the new virus (twitter.com/WHO/status/1227248333871173632). Exactly 1 month later, WHO Director General Dr. Tedros Adhanom Ghebreyesus declared the worldwide spread of infection a pandemic (who.int/dg/speeches/detail/who-director-general-s-opening-remarks-at-the-media-briefing-on-covid-19---11-march-2020). This is an incredibly rapid-fire progression of events. Thus, it is hardly a surprise that research output aimed at understanding the virus, identifying appropriate treatments and interventions, and studying societal impacts has proliferated at an unprecedented rate.

A report by Digital Science, “How Covid-19 Is Changing Research Culture” (digitalscience.figshare.com/articles/How_COVID-19_is_Changing_Research_Culture/12383267), gives some data about this proliferation. Digital Science’s Dimensions database contains, as of August 2020, more than 42,000 published articles; more than 3,000 clinical trials; and hundreds of datasets, policy documents, and grants related to COVID-19. Add to that 17,000 identified individual researchers studying COVID-19 and at least $2.9M USD in grants to publicly funded global institutions.

The rapid spread of the virus has made turnaround of research results urgent. One of the most notable shifts in research culture identified in the report is the elevated role of preprint servers in pushing research output to the scientific community. “Instant” availability of publications via open access (OA) is on the rise. While the bulk of research has been medical and health-related, study of the pandemic has occurred in a wide swath of disciplines in both the hard and social sciences.

Tools for Measuring Covid-19 Research Impact

The impact of the research proliferation related to COVID-19 is already being quantified and reported. Several internet resources and publications are identifying trends and describing the research landscape and patterns across the topic.

Covid-19 Bibliometrics

Kudos to the experts at the University of Malaya, who hopped on this definitive web address (covid19bibliometrics.org), which states that the research group maintaining the website has the following aims:

1. To update the global scientific community on current Covid-19 related published papers in an accessible, timely and trusted format

2. To analyse the published papers for trends and to provide insights

3. To identify gaps in research

4. To promote international research collaboration with a view to overcoming Covid-19 and promoting recovery

The researchers use a fairly simple Scopus keyword search from which to gather their corpus of COVID-19 research articles. From this corpus, they derive a number of interactive visualizations and subject-related alerts. The Research Trends page provides information, such as publication rates, growth, and patterns, in very well-designed interactive maps and graphs filterable by country, subject, researcher affiliation, funder, journal, document type, and top-cited articles. The Information Super Hub tab provides a collection of reputable sources of COVID-19 research, and the Research Insights section operates as a current awareness service providing summaries of research identified as being of significance. Of particular interest to researchers are the areas identified as gaps in the COVID-19 research (covid19bibliometrics.org/copy-of-social-sciences-and-policy).

Nature Index Blog

Using Dimensions data, a Nature Index blog post gives updates on publication metrics related to COVID-19 (natureindex.com/news-blog/the-top-coronavirus-research-articles-by-metrics). The posts provide screenshots and summaries rather than interactive graphics. However, each post remains on the site, allowing you to go back and look at changes in some of the top organizations, topics, publications, and other statistics, rather than only having the most current ranking information available. The blog post links to a saved search in Dimensions that you can simply click on to replicate the strategy and see results. This is a great DIY source for slicing and dicing the COVID-19 research corpus (although the data quality of Dimensions leaves something to be desired).

Where Are Google and Web of Science in This Race?

As of this writing, I could not identify a similar dashboard or other free web resource that uses the Web of Science (WoS) bibliographic dataset to perform similar or related analyses on COVID-19 research. A representative from Clarivate, parent company of WoS, pointed me to its blog related to life sciences research intelligence (clarivate.com/cortellis/blog), but did not know of a bibliographic analysis tool or report on COVID-19 using WoS data.

Although it doesn’t measure research impact, the Google Trends COVID-19 page provides interesting data about search trends related to COVID-19 (trends.google.com/trends/story/US_cu_4Rjdh3ABAABMHM_en). This collection of interactive visualizations and rankings covers trends in what is being searched, geographic demarcations, and deeper dives into subcategories such as symptoms, face masks, societal impact, and other topics of interest to Google searchers. As with WoS, I was unable to identify any sort of free web resource created using Google Scholar data providing bibliometric analysis.

A (Very) Modest Experiment

Although the web resources pull together a lot of information about the state of COVID-19 research worldwide, quite a bit more information exists in traditional PRJAs (peer-reviewed journal articles) and related publications. To get a rough sketch of the quantity and nature of published bibliometric analyses related to the corpus of COVID-19 research, I did some very simple searches in PubMed, WoS, Dimensions (free version), and Scopus. This exercise also gives some clues as to the coverage of these topics in the known citation databases.

I kept the search queries very simple and erred on the side of precision, not recall. I was by no means trying to be comprehensive and used the most typical search terms for my concepts, namely, covid-19 and bibliometrics. I used these terms in subject headings where possible. If none were available, I took the approach of using keywords with field limiters (title, subject, abstract). Here is my back-of-the-envelope analysis of the results.

PubMed returned nine results, using a search of bibliometrics as a MeSH Major Topic (MeSH stands for Medical Subject Headings, PubMed’s database thesaurus), and covid-19 as a Supplementary Concept, as the term has not yet been incorporated into MeSH. It is not surprising that PubMed returned a result list in the single digits, since it is a domain-specific database related to medicine and health, while the other databases I searched are more cross-disciplinary. A simple topic search of covid-19 AND bibliometrics in WoS turned up 21 results. I have no direct access to Scopus, but thanks to Zachary Painter at Stanford University, a search of covid-19 in Scopus’s [KEY] field (searches an amalgam of controlled vocabulary and index fields) and bibliometric in general search (Tit-Auth-Abs) yielded 27 results.

In Dimensions, I ran the covid-19 AND bibliometrics string as a free-text search limited to title and abstract and turned up a walloping 73 results. There were some challenges with these findings. The 73 hits from Dimensions included 11 duplicate records, leaving 62 unique results. This likely has to do with the structure of the tool. Dimensions does not have its own curated dataset; rather, it pulls from other reliable scientific resources to obtain its content. It was fairly simple to go through and remove the duplicates by hand, but this redundancy of results could easily be problematic for researchers doing analyses on grander scales. OpenRefine or other data cleaning tools may come in handy for larger projects using Dimensions.
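For anyone who would rather script the cleanup than do it by hand, here is a minimal Python sketch of one way to drop duplicate records. It is not what I did for this column, and it is not how Dimensions or OpenRefine work internally. It assumes each exported record is a dictionary with hypothetical "title" and "doi" fields, and it treats two records as the same publication if they share a DOI or a normalized title. The sample records and DOIs are invented for illustration.

```python
import re
import unicodedata


def normalize_title(title: str) -> str:
    """Lowercase, strip accents and punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFKD", title)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^a-z0-9]+", " ", text.lower())
    return text.strip()


def dedupe(records):
    """Keep the first record seen for each DOI or normalized title.

    Assumption: records are dicts with optional "title" and "doi" keys.
    A record is dropped if either of its keys matches an earlier record.
    """
    seen = set()
    unique = []
    for rec in records:
        keys = set()
        doi = (rec.get("doi") or "").strip().lower()
        if doi:
            keys.add(("doi", doi))
        title = normalize_title(rec.get("title") or "")
        if title:
            keys.add(("title", title))
        if keys & seen:
            continue  # matches something already kept, so skip it
        seen |= keys
        unique.append(rec)
    return unique


# Invented sample records (hypothetical titles and DOIs)
sample = [
    {"title": "A Bibliometric Analysis of COVID-19 Research", "doi": "10.0000/example.1"},
    {"title": "A bibliometric analysis of COVID-19 research.", "doi": ""},
    {"title": "Mapping coronavirus publications", "doi": "10.0000/example.2"},
]
print(len(dedupe(sample)))  # 2
```

Matching on title as well as DOI will merge a preprint with its later published version if the title did not change. Whether that is desirable depends on the question being asked, so treat the matching rule as a starting point.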

Once the Dimensions results were cleaned up, I aggregated the results from the four databases and removed duplicates. This resulted in 75 unique publications. Considering the vast majority of COVID-19 research was published in the current year (2020), it is rather surprising that there are already 75 unique bibliometric analyses gleaned from these cursory searches. In all likelihood, there are more studies that my searches did not pick up. That there is a body of research so large that 75 bibliometric analyses can be conducted on it within the same publication year is a testament to the proliferation rate of COVID-19 papers.

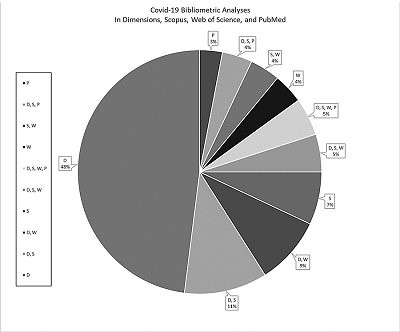

I did a chicken-scratch overlap analysis of how many appeared in multiple databases. (See my pie chart at right.) Only four publications were available in all databases searched. A small number (n=7) were likewise found in three out of four databases. (Four were in all but PubMed, three in all but WoS; no publications were found in all but Scopus or all but Dimensions.) Eighteen titles were located in two out of the four databases. (Eight were found in both Dimensions and Scopus, seven were found in Dimensions and WoS, and three were found in Scopus and WoS.)

Just over 60% (n=46 publications) were found in only a single database. The majority of these (n=36) came from Dimensions, which indexes the preprint servers, such as medRxiv, bioRxiv, and other rapid-turnaround vehicles for getting research out and available, such as SSRN and other OA sources.
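The overlap tally above is easy to reproduce in a few lines of Python. The sketch below is illustrative only: the function name and the toy publication IDs are hypothetical, and the only real numbers are the counts reported in this column (4, 7, 18, and 46), which it uses to check the total and the single-database share.

```python
from collections import Counter


def overlap_histogram(hits):
    """Count how many publications appear in exactly 1, 2, 3, ... databases.

    hits: dict mapping a database name to the set of publication IDs it returned.
    """
    membership = Counter()
    for ids in hits.values():
        membership.update(ids)  # each ID is counted once per database
    return Counter(membership.values())


# Toy data with invented IDs, just to show the shape of the input
toy = {
    "PubMed": {"a"},
    "WoS": {"a", "b"},
    "Scopus": {"a", "b", "c"},
    "Dimensions": {"a", "c", "d", "e"},
}
hist = overlap_histogram(toy)
print(dict(hist))

# Counts reported in the text: number of databases -> number of publications
reported = {4: 4, 3: 7, 2: 18, 1: 46}
total = sum(reported.values())
share_single = reported[1] / total
print(total, round(share_single * 100, 1))  # 75 61.3
```

The four categories sum to the 75 unique publications, and 46 of 75 works out to about 61%, which is where "just over 60%" comes from.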

Waiting for Research to Publish

Although Dimensions can be a pain in terms of cleaning data, it helpfully returns early-release preprints in search results. If I had access to the paid version of Dimensions, the results would be even more robust. The other three databases generally focus only on peer-reviewed, formally published research, in the English language. Jeffrey Brainard in the Science blog (sciencemag.org/news/2020/05/scientists-are-drowning-covid-19-papers-can-new-tools-keep-them-afloat) indicated in May 2020 that the number of papers released on COVID-19 doubles every 20 days. The rate of output is so frenetic that Scopus and WoS updates may lag behind the current status of research findings. Fear not, however. There are a number of resources working to filter that firehose to identify COVID-19 research output that is relevant and timely.

2019 Novel Coronavirus Research Compendium (NCRC; ncrc.jhsph.edu) is a compilation of research from Johns Hopkins Bloomberg School of Public Health that seems to be curated by humans—eight topic-based teams of them, in fact. They provide an evaluative context and summary for the papers they identify, and they offer current awareness by email updates.

CORD-19 from the Allen Institute for Artificial Intelligence provides a daily updated open dataset of COVID-19 research for searching and also analysis. Check out the topical resources (semanticscholar.org/cord19) or, if you’re ambitious, download the whole dataset and make your own magic (allenai.org/data/cord-19).

COVIDScholar (covidscholar.org), from the folks at the Lawrence Berkeley Lab, uses natural language processing to aggregate COVID-19 articles and make them available in a one-stop literature review resource. It also has a really cool “word embeddings” 3D visualization model you can play with.

Rapid Reviews: COVID-19 (rapidreviewscovid19.mitpress.mit.edu) calls itself an “access overlay journal.” It is from MIT Press and identifies timely COVID-19 preprints and sets up what it terms “cross-linked, rapid peer review.” This initial review helps to identify the basic reliability of the information in the identified papers.

Doing the Business of Research Differently

In this pandemic, research is happening differently. Many of the changes could be considered improvements to opening the research process. There has been a marked uptick in the number of free or OA resources available on COVID-19. We are seeing an increase in open peer review and crowdsourced science.

Technology is leveraged like never before. For example, use of automated processes such as machine learning, AI, and natural language processing has sped up many analyses of the impact of COVID-19 research. At the same time, the deluge of new papers could lead to a wasteful redundancy in studies. Collaboration and cross-communication across the research landscape are ever more critical. The trick will be to leverage process improvement throughout the research lifecycle after the emergency subsides and mitigate duplicative research endeavors.