FROM THE INNOVATION LAB

What a 9th-Grade Paper Can Teach Us About Fixing American Division

by Chad Mairn

I am proud to report that my 15-year-old son, Sam, received an A+ on his latest history paper, in which he navigated political and sociological territory that many adults are still trying to traverse. His paper was essentially about polarization. He also cited Duverger’s law, the principle that winner-take-all (plurality) elections like ours in the U.S. tend to produce a rigid two-party system. As Sam put it, “This is a bad direction for a country to head in because it turns people against one another, making simple debates much more heated.” Sam’s analysis of our political hardware (i.e., the system) was sharp for a 9th grader. I was not familiar with Duverger’s law, so it was refreshing to have Sam explain it to me before I read his paper. Once I finished reading, I began thinking more about the software (i.e., the information) that runs on top of that hardware. If the two-party system is the hardware that divides us, algorithmic bias is software that accelerates this division.

At its simplest level, I think of an algorithm as a recipe—step-by-step instructions designed to achieve some sort of goal. Just as a recipe takes ingredients (data) and prepares them step-by-step (the code) to produce a meal (the output), a social media algorithm, for example, takes your digital ingredients (e.g., every Like; every half-second pause, quick 10-second rewind, or fast-forward on a video; or every search for a yoga mat) and follows a sequence to produce a curated feed based primarily on the ingredients.
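For readers who want to see the recipe analogy as code, here is a minimal Python sketch. The signal names, weights, and topics are all hypothetical assumptions; no platform publishes its actual recipe. It turns engagement “ingredients” into a per-topic interest profile and then ranks candidate posts by that profile.

```python
from collections import Counter

# Hypothetical weights: how much each kind of signal counts as "interest".
# These numbers are invented for illustration only.
SIGNAL_WEIGHTS = {"like": 3.0, "rewind": 2.0, "search": 2.5, "pause": 1.0, "skip": -1.0}


def build_interest_profile(events):
    """The ingredients: turn (signal, topic) events into per-topic interest scores."""
    profile = Counter()
    for signal, topic in events:
        profile[topic] += SIGNAL_WEIGHTS.get(signal, 0.0)
    return profile


def curate_feed(candidates, profile, size=3):
    """The steps: rank candidate posts by the user's interest in each post's topic."""
    # Topics the user never touched score 0 (Counter returns 0 for missing keys).
    ranked = sorted(candidates, key=lambda post: profile[post["topic"]], reverse=True)
    return [post["title"] for post in ranked[:size]]


events = [("search", "fitness"), ("like", "fitness"), ("pause", "cooking"), ("skip", "politics")]
candidates = [
    {"title": "Budget roundup", "topic": "politics"},
    {"title": "Pasta tips", "topic": "cooking"},
    {"title": "Yoga basics", "topic": "fitness"},
    {"title": "Gardening", "topic": "gardening"},
]
profile = build_interest_profile(events)
print(curate_feed(candidates, profile))  # ['Yoga basics', 'Pasta tips', 'Gardening']
```

A single search and a Like are enough to put the fitness post first, while the skipped politics post drops below a topic the user never touched at all.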

The problem isn’t that the recipe exists; the problem is what the recipe is designed to do. In a kitchen, a chef might optimize for flavor or nutrition. In the digital world, the chef (in this case, the platform) is optimizing the recipe for engagement. If the goal is to keep you at the table for as long as possible, the algorithm will keep serving the most addictive ingredients (i.e., the content that confirms your biases and triggers your emotions), even if they are not healthy for you to eat.
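The point about what the recipe is designed to do can be made concrete with a tiny Python sketch: the same ranking code produces a very different menu depending on which goal it optimizes. The posts and both scores are invented; “nutrition” is a hypothetical stand-in for qualities such as accuracy or balance that a platform could, in principle, optimize instead.

```python
# Hypothetical posts with two invented scores: predicted engagement and a
# made-up "nutrition" score standing in for accuracy or balance.
posts = [
    {"title": "Outrage clip", "engagement": 0.9, "nutrition": 0.2},
    {"title": "Explainer", "engagement": 0.4, "nutrition": 0.9},
    {"title": "Local news", "engagement": 0.5, "nutrition": 0.7},
]


def rank(posts, objective):
    """Same recipe, different goal: sort posts by whichever score the chef optimizes."""
    return [p["title"] for p in sorted(posts, key=lambda p: p[objective], reverse=True)]


print(rank(posts, "engagement"))  # ['Outrage clip', 'Local news', 'Explainer']
print(rank(posts, "nutrition"))   # ['Explainer', 'Local news', 'Outrage clip']
```

Nothing about the code changes between the two menus; only the objective does.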

From News to Noise

Before CNN arrived in 1980, we didn’t have 24/7 news; instead, we had the nightly 6:00 p.m. news broadcast. Back then, a journalist’s job was to present objective news and facts of the day, leaving the analysis to the viewer. We were given the news and expected to form our own conclusions using critical thinking. These days, the need to fill 24 hours of airtime and keep our eyes glued to screens has created economic and algorithmic pressures that increasingly reward personal anger and public outrage over careful, reputable reporting. I should note that not all media outlets operate this way, and some actively resist it.

Today, that silence in which viewers could form their own conclusions has been replaced by noise—and lots of it. With 24/7 news outlets such as CNN or Fox News, we’re no longer given objective news—we’re given the meaning of the news before we’ve had a chance to even process it. We see panels of so-called experts who don’t listen to one another—they talk over each other, yell at each other, and dismiss opposing views. It isn’t dialogue. It’s a deafening battle, a spectacle, staged for ratings. Although each side declares victory, there is no true winner. We are now left more confused than informed and more divided than enlightened. It is designed to be never-ending. This is when I turn the television off.

Beyond news consumption, there has also been a shift in how political identity is formed. Thirty-plus years ago, political affiliation was treated primarily as a private matter. You did not walk into a grocery store or a dinner party and announce whom you voted for. There was a shared understanding that a person’s vote was a quiet civic duty rather than a public badge of honor (or, as we often see today, a flag or set of stickers displayed prominently on the back of a car). This privacy created a social buffer that allowed neighbors to remain friendly even if they held different beliefs. Today, that buffer is mostly gone.

When privacy dies, the middle ground Sam wrote about in his paper dies with it. When every opinion is public, changing your mind is no longer just a shift in thought: it can invite public shaming. Most importantly, when we openly broadcast our political beliefs, we hand the algorithm the labels it uses to build our filter bubble (i.e., the personalized information environment that forms when algorithms narrow content based on our past engagement), making ourselves easier to categorize, easier to sell to, and, unfortunately, easier to turn against one another.

How Shopping Lists Become Political Identities

To understand how we get sorted into these silos, we must look at the “proxies” that algorithms use to categorize us. According to Jonker and Rogers, algorithmic bias “occurs when systematic errors in machine learning algorithms produce unfair or discriminatory outcomes” and “often reflects or reinforces existing socioeconomic, racial and gender biases.” Imagine Person A buying a yoga mat on Amazon. By itself, it is just a piece of fitness equipment. However, an algorithm might cross-reference this purchase with millions of other data points and treat “yoga mat buyer” as a high-probability proxy for a person with liberal-leaning values. Going further, it might also infer income levels, ZIP codes, and cultural affiliations. Conversely, if Person B searches for a holster, the system might flag them as conservative. Once these digital labels stick, the personalization engine kicks in. Person A may start to see more liberal stories about, say, climate change, whereas Person B may see more conservative content, such as stories about border security.
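To make the proxy idea concrete, here is a toy Python sketch in which each purchase or search nudges an inferred label. It is not how Amazon or any other company actually works; the signals, weights, and simple logistic scoring are all assumptions chosen for illustration. It does show how a single weak proxy can be enough to stick a label on someone, which is one way the systematic errors Jonker and Rogers describe can creep in.

```python
import math

# Invented log-odds weights for hypothetical signals.
# Positive values nudge toward "liberal-leaning", negative toward "conservative-leaning".
PROXY_WEIGHTS = {"yoga mat": 0.8, "reusable bags": 0.5, "holster": -0.9, "hunting boots": -0.6}


def infer_leaning(signals, prior_log_odds=0.0):
    """Combine proxy signals into a probability and a (possibly wrong) label."""
    log_odds = prior_log_odds + sum(PROXY_WEIGHTS.get(s, 0.0) for s in signals)
    probability = 1 / (1 + math.exp(-log_odds))  # logistic function
    if probability > 0.5:
        label = "liberal-leaning"
    elif probability < 0.5:
        label = "conservative-leaning"
    else:
        label = "unlabeled"
    return label, round(probability, 2)


print(infer_leaning(["yoga mat"]))  # ('liberal-leaning', 0.69)
print(infer_leaning(["holster"]))   # ('conservative-leaning', 0.29)
```

Nothing in the sketch checks whether the label is true. A conservative yoga enthusiast is simply mislabeled, and every personalized recommendation that follows is built on that error.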

Anjelica N. Singer (2022) notes that these algorithms interpret every click, Like, and share as a signal of interest and use it to further refine what Eli Pariser, whom Singer cites, calls a user’s “unique universe of information.” Here, the algorithm isn’t a static recipe; it constantly adjusts based on your last meal. Every time you engage with a post that confirms your beliefs, the algorithm interprets that as a success and serves you another version of the same perspective the next time you visit the platform. This is where the filter bubble hardens and becomes difficult to pop. Because these bubbles are “devoid of attitude-challenging content,” users primarily see posts they already agree with. As Sam noted in his paper, this lack of overlap makes “simple debates much more heated,” because we no longer share a baseline of truth.
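The feedback loop Singer describes can be simulated in a few lines of Python. This is a deliberately simplified sketch under stated assumptions: the feed starts evenly split between two perspectives, the user engages only with posts that agree with them, and each engagement multiplies that perspective’s weight by a hypothetical boost factor. Real systems are far more complex, but the narrowing has the same shape.

```python
def simulate_filter_bubble(rounds, boost=1.5):
    """Return the share of agreeable content in the feed at each visit."""
    # Hypothetical starting mix: an evenly split feed.
    weights = {"agrees_with_me": 1.0, "challenges_me": 1.0}
    shares = []
    for _ in range(rounds):
        total = sum(weights.values())
        shares.append(round(weights["agrees_with_me"] / total, 2))
        # Assumption: the user engages only with agreeable posts, and the
        # algorithm treats that engagement as success, boosting that perspective.
        weights["agrees_with_me"] *= boost
    return shares


print(simulate_filter_bubble(5))  # [0.5, 0.6, 0.69, 0.77, 0.84]
```

Nothing in the loop deletes the challenging content; it is simply crowded out, visit after visit, which is why the bubble feels natural from the inside.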

Roots of Cherry-Picking

While these algorithms are new, the human tendency to cherry-pick information and seek confirmation of existing beliefs goes back centuries. Four hundred years ago, Francis Bacon, one of the fathers of the scientific method, argued that human understanding, once it has adopted an opinion, “draws all things else to support and agree with it.” Bacon grouped this tendency among the “Idols of the Tribe,” comparing the human mind to a “false mirror” that distorts reality by mixing its own biases into what it sees.

For many people, changing their mind feels like betraying their own identity. John Stuart Mill (1859) argued, in effect, that calling yourself a “liberal” or a “conservative” means little if you don’t truly understand the other side’s strongest arguments. He wrote that “he who knows only his own side of the case knows little of that” and insisted that we must hear opposing views from people who actually believe them, in their “most plausible and persuasive form.”

The Power of Scaffolded Search

If we are to fulfill what Sam calls the “duty of a citizen to research,” we must move beyond the true-or-false verdict of traditional fact-checking. Information literacy isn’t just about spotting fake news; it’s about having the courage to change your mind. Channeling Mill, I tell my students that when facts challenge your beliefs, you should let them. A belief system grounded in truth is a fortress, whereas a belief system grounded in bias is a bubble. Doing this requires more than openness to change; it requires a new methodology suited to the digital age. Mike Caulfield (2025) argues that while early AI was “junk” for verification, the addition of search capabilities has changed the game. He calls this new paradigm scaffolded search: a process whereby AI assists the user in navigating, summarizing, and, most importantly, critiquing complex search results.

Caulfield’s Deep Background GPT (generative pre-trained transformer) offers one way out of the silos described above, the same silos Sam described in his research paper. As a custom GPT, it functions like a specialized application within ChatGPT, designed with specific instructions, constraints, and goals rather than as a general-purpose chatbot. Deep Background is built on the SIFT methodology (stop, investigate the source, find better coverage, and trace claims to their original context). It acts like a co-reasoning partner that can assist a researcher by critically analyzing information while also critiquing its own output to help surface potential biases.

Cleaning Your Digital Mirror

Clearing your web browser’s cookies and history is a start, but if you want to truly pop the filter bubble, you may want to try the “nuke” option that many platforms now offer to wipe the “digital mirror” that algorithms have built. On TikTok or Instagram, for example, users can reset their recommendations in their settings. This is meant to make the algorithm set aside past engagement and treat the user as if they were new.

Another option is to switch your social media feed from For You to Following or Most Recent. Doing so disables much of the AI-driven curation, which is optimized for engagement and therefore often favors controversial or polarizing content, and restores a feed based on accounts you chose to follow. I do this occasionally, mostly out of curiosity, but users can also take a more deliberate approach by engaging with content from different perspectives. Over time, even small acts of intentional engagement can reintroduce diversity into an otherwise narrowed feed.
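The difference between an engagement-ranked For You feed and a Following or Most Recent feed comes down to how posts are selected and sorted. Here is a minimal Python sketch with hypothetical accounts, timestamps, and predicted-engagement scores; the real signals platforms use are not public, so treat this only as an illustration of the two sorting strategies.

```python
from datetime import datetime

# Hypothetical posts; "predicted_engagement" stands in for a platform's model output.
posts = [
    {"author": "neighbor", "posted": datetime(2025, 3, 1, 9, 0), "predicted_engagement": 0.20},
    {"author": "outrage_pundit", "posted": datetime(2025, 3, 1, 7, 0), "predicted_engagement": 0.95},
    {"author": "local_library", "posted": datetime(2025, 3, 1, 8, 0), "predicted_engagement": 0.40},
]
following = {"neighbor", "local_library"}


def for_you_feed(posts):
    """Engagement-ranked: any account can appear, highest predicted engagement first."""
    ranked = sorted(posts, key=lambda p: p["predicted_engagement"], reverse=True)
    return [p["author"] for p in ranked]


def following_feed(posts, following):
    """Only accounts you chose to follow, newest first; no engagement prediction used."""
    mine = [p for p in posts if p["author"] in following]
    return [p["author"] for p in sorted(mine, key=lambda p: p["posted"], reverse=True)]


print(for_you_feed(posts))               # ['outrage_pundit', 'local_library', 'neighbor']
print(following_feed(posts, following))  # ['neighbor', 'local_library']
```

In the engagement-ranked version, an account you never chose can jump to the top; in the chronological version, your own choices and the clock decide what you see.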

A Guide to Finding the Truth

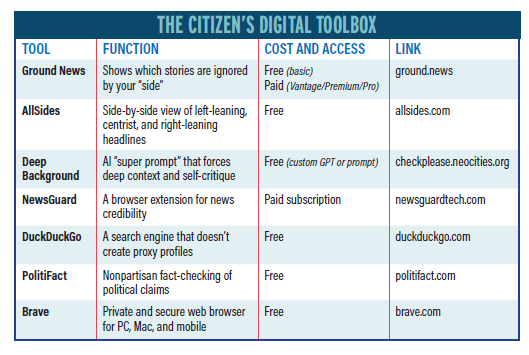

Navigating the internet today requires curiosity; however, finding truth grounded in facts demands that a researcher develop a thorough and systematic research process to filter out the noise. The following are some search tips and tools to use when navigating the internet:

- Detect blind spots—Before forming an opinion, use applications such as Ground News to see whether a story is being covered across the political spectrum. If only one side is talking about an event or an issue, the coverage you are seeing is probably missing context.

- Compare the headlines—Visit AllSides to read headlines side-by-side. AllSides’ mission, per its About page, is to “help people better understand the world—and each other.”

- Verify the source—Before citing a source, check its NewsGuard rating or visit PolitiFact. If a source has a history of publishing unverifiable content, you should disregard it.

- Depolarize your search—Use the DuckDuckGo search engine or the Brave web browser for sensitive topics. Because these tools are designed to limit tracking, they help you get a cleaner set of results that hasn’t been tailored to your personal yoga mat or holster profile.
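The blind-spot idea in the first tip can be sketched as a simple coverage check. This is not Ground News’s actual method; the outlet names, their leanings, and the 80 percent threshold are hypothetical assumptions. The sketch flags a story when nearly all of its left- or right-leaning coverage comes from one side.

```python
from collections import Counter

# Hypothetical outlet ratings; real services publish their own bias ratings.
OUTLET_LEANING = {"Outlet A": "left", "Outlet B": "left", "Outlet C": "center", "Outlet D": "right"}


def is_blind_spot(outlets_covering_story, threshold=0.8):
    """Flag a story when one side supplies at least `threshold` of the partisan coverage."""
    counts = Counter(OUTLET_LEANING.get(o, "unrated") for o in outlets_covering_story)
    partisan = counts["left"] + counts["right"]
    if partisan == 0:
        return False  # only center or unrated outlets: no partisan imbalance to measure
    return max(counts["left"], counts["right"]) / partisan >= threshold


print(is_blind_spot(["Outlet A", "Outlet B", "Outlet C"]))  # True: no right-leaning coverage
print(is_blind_spot(["Outlet A", "Outlet C", "Outlet D"]))  # False: both sides covered
```

A flag here does not mean a story is false, only that one side of the spectrum is not talking about it, which is exactly when it is worth looking for missing context.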

Moving Toward Respectful Rhetoric

The conclusion of Sam’s paper resonated with me, particularly his call to seek solutions in which “respect is the utmost priority.” Respect requires understanding, and yet true understanding becomes difficult when our technology is designed to keep us divided. Sam also identifies political literacy as a civic duty. I would add that algorithmic literacy must be part of that duty as well. We are grappling not only with a two-party system but also with an engagement-driven machine that profits from our division. If we hope to address polarization, we must begin by popping our own filter bubbles. We (and by “we,” I mean librarians and information professionals) must reach across the divide, even when the algorithm whispers that the person on the other side is our enemy rather than simply another person shaped by a different information stream.

This column was born from a lunchtime conversation between my son and me, during which he identified the political hardware (i.e., the system) that divides us. It became my goal to briefly explore the software (i.e., the information) that is weaponizing our division, while also providing some resources to help with your quest to recognize, question, and, ultimately, resist the informational forces that profit from keeping us apart. As Caulfield’s scaffolded search reminds us, we have the tools to move beyond public outrage, but we must also have the will and understanding to use them responsibly and correctly. We owe it to the next generation, to students like Sam, to ensure that our technology serves as a window to a shared reality rather than as a mirror that reflects only our own fears and biases.
